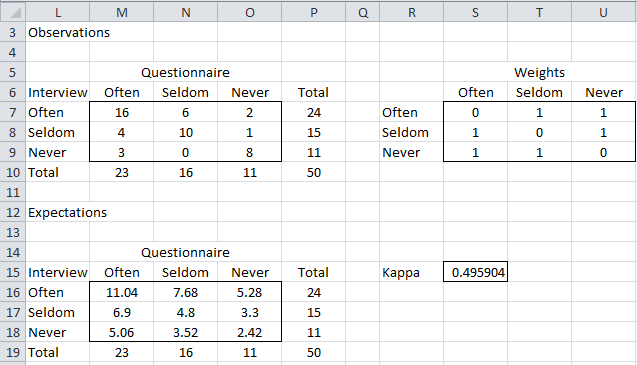

Reliability of Nominal Data Based on Qualitative Judgments - William D. Perreault, Laurence E. Leigh, 1989

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

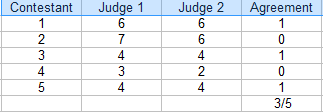

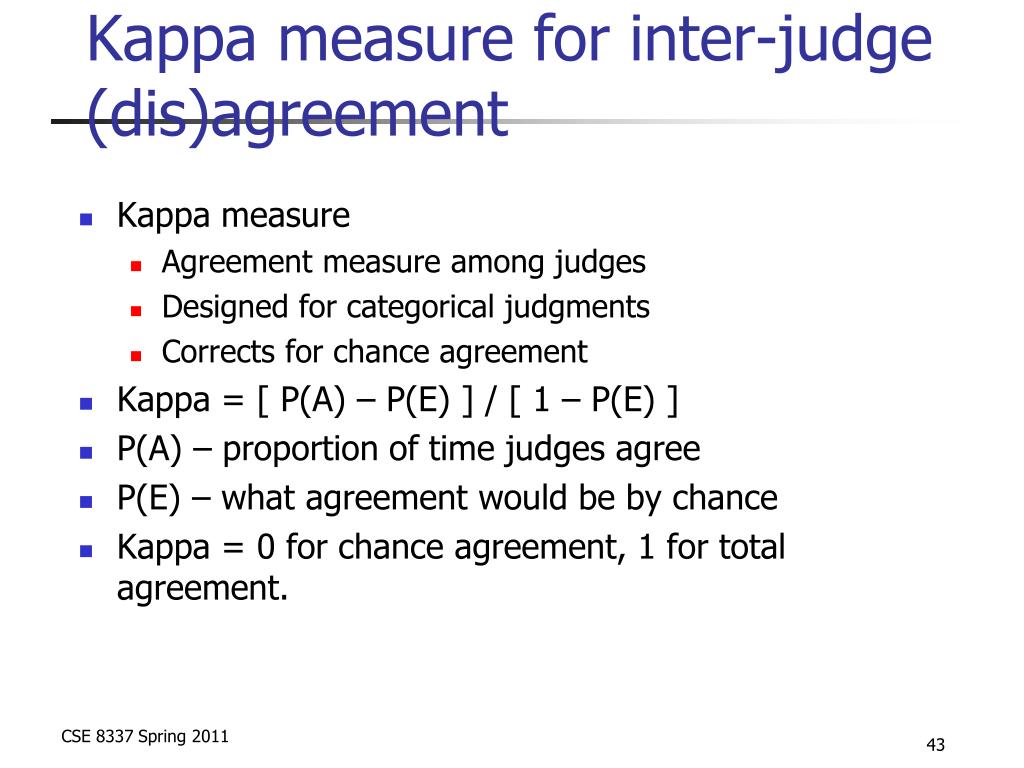

28. Kappa measure for Interjudge (dis)agreement for Accessing Relevance in Information Retrieval - YouTube

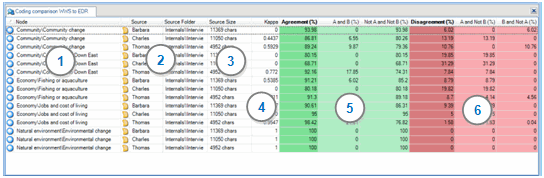

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… - Louis de Bruijn, Towards Data Science
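
The article above contrasts pair-wise Cohen's kappa with group-level Fleiss' kappa. Below is a minimal plain-Python sketch of both. It assumes nominal labels and that every rater labels every item. It summarizes the pairwise scores by averaging them (Light's kappa), which is one common choice but not necessarily the article's. The function names `cohen_kappa`, `mean_pairwise_kappa` and `fleiss_kappa` are hypothetical and do not come from any of the linked sources.

```python
from collections import Counter
from itertools import combinations


def cohen_kappa(a, b):
    """Cohen's kappa for two raters labelling the same items (nominal labels)."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(a)
    # Observed agreement: share of items both raters labelled identically.
    p_o = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement from each rater's own marginal label frequencies.
    ca, cb = Counter(a), Counter(b)
    p_e = sum(ca[k] * cb[k] for k in set(ca) | set(cb)) / (n * n)
    if p_e == 1:
        return float("nan")  # undefined: both raters used one identical category
    return (p_o - p_e) / (1 - p_e)


def mean_pairwise_kappa(ratings):
    """Average Cohen's kappa over every pair of raters (Light's kappa)."""
    pairs = list(combinations(ratings, 2))
    return sum(cohen_kappa(a, b) for a, b in pairs) / len(pairs)


def fleiss_kappa(ratings):
    """Fleiss' kappa; ratings[r][i] is rater r's label for item i.
    Assumes at least two raters and that every rater labels every item."""
    n_raters, n_items = len(ratings), len(ratings[0])
    # counts[i][k]: how many raters put item i into category k.
    counts = [Counter(r[i] for r in ratings) for i in range(n_items)]
    categories = set().union(*counts)
    # Per-item agreement: fraction of agreeing rater pairs on item i.
    p_items = [
        (sum(c * c for c in cnt.values()) - n_raters) / (n_raters * (n_raters - 1))
        for cnt in counts
    ]
    p_bar = sum(p_items) / n_items
    # Chance agreement from category proportions pooled over all raters.
    p_cat = [sum(cnt[k] for cnt in counts) / (n_items * n_raters) for k in categories]
    p_e = sum(p * p for p in p_cat)
    if p_e == 1:
        return float("nan")  # undefined: a single category was used throughout
    return (p_bar - p_e) / (1 - p_e)


raters = [[1, 1, 0, 0], [1, 0, 0, 0], [1, 1, 0, 0]]
print(cohen_kappa(raters[0], raters[1]))  # 0.5
print(mean_pairwise_kappa(raters))
print(fleiss_kappa(raters))
```

Fleiss' kappa pools the category proportions across all raters, so it generalizes Scott's pi rather than Cohen's kappa. Even with only two raters, it can therefore differ from Cohen's kappa on the same data.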

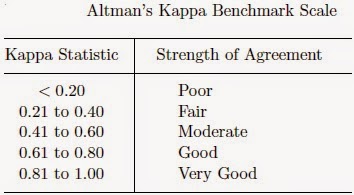

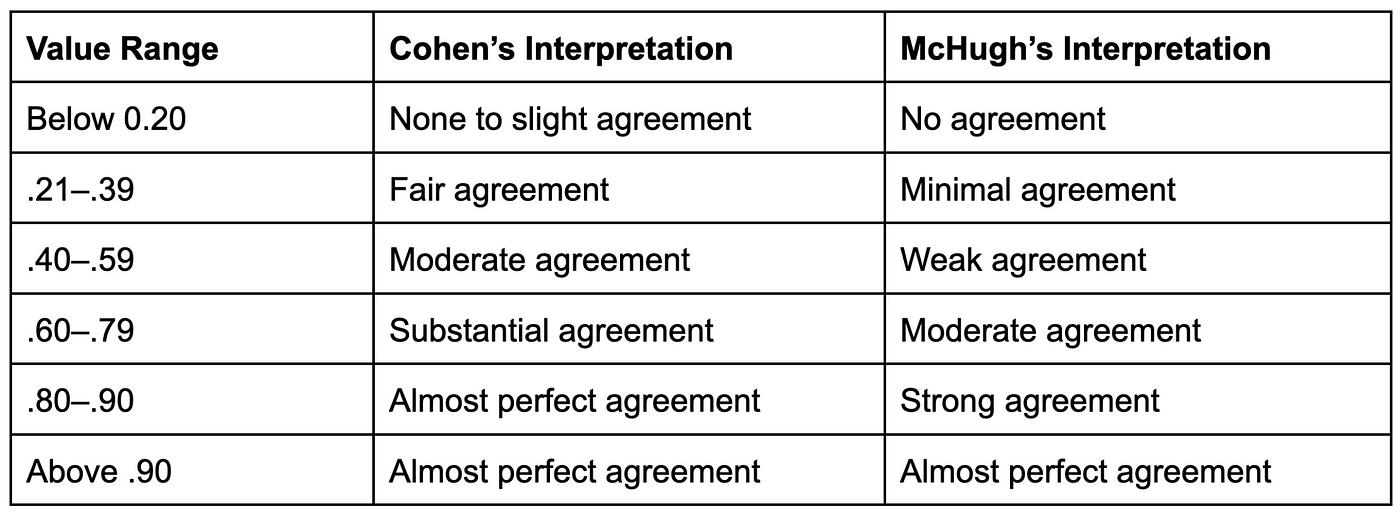

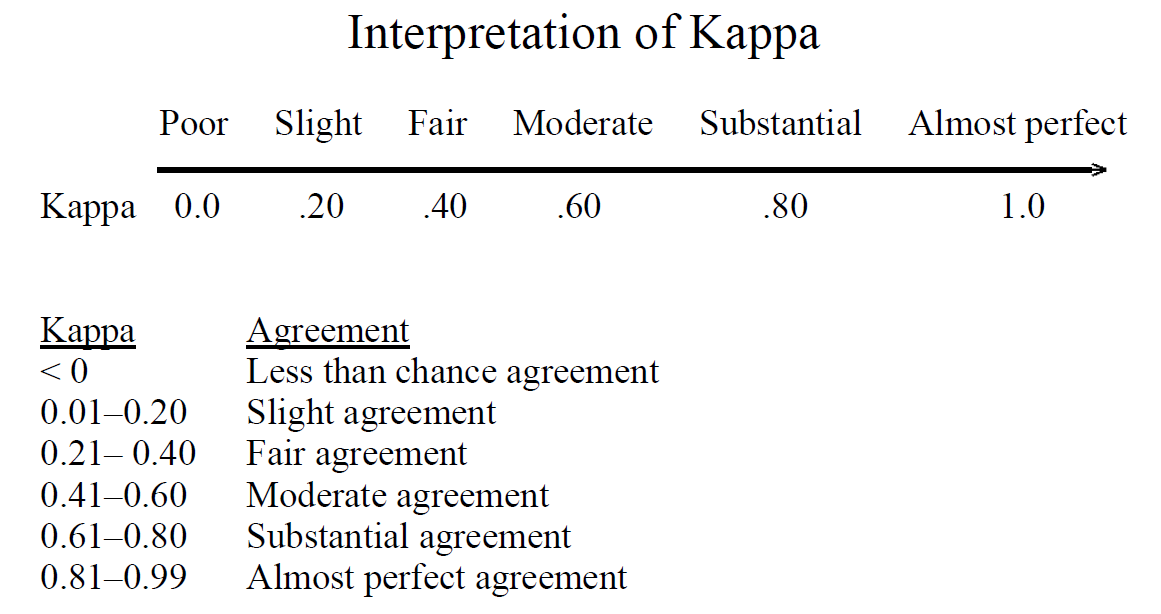

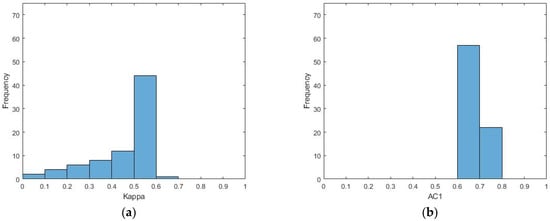

An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters - Symmetry